1. Diverse and Uncertain Representation

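• Representations can be diverse (a user may have several distinct interests) or uncertain
• Multiple vector approach
  ◦ In such cases, it is hard to represent a user with a single vector
    ▪ Extend the representation to multiple vectors
    ▪ Disentangled representation learning, capsule networks
  ◦ DGCF: encourages the learned vectors to be orthogonal
• Density representation
  ◦ Encodes uncertainty better
  ◦ Can be viewed as Gaussian embedding: each node is a distribution (mean and variance) rather than a single point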
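The multiple-vector idea can be illustrated with a minimal sketch, assuming each user owns K plain Python vectors. The penalty below (sum of squared pairwise cosine similarities) is a simple stand-in for pushing a user's vectors toward orthogonality; it is not DGCF's actual regularizer, and the names `orthogonality_penalty` and `multi_vector_score` are hypothetical.

```python
import math


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def orthogonality_penalty(vectors):
    """Sum of squared cosine similarities over all distinct pairs of one user's vectors.

    It is 0 exactly when the vectors are mutually orthogonal and grows as they overlap.
    """
    penalty = 0.0
    for i in range(len(vectors)):
        for j in range(i + 1, len(vectors)):
            norms = math.sqrt(dot(vectors[i], vectors[i]) * dot(vectors[j], vectors[j]))
            cos = dot(vectors[i], vectors[j]) / max(norms, 1e-12)  # guard against zero vectors
            penalty += cos ** 2
    return penalty


def multi_vector_score(user_vectors, item_vector):
    """Score an item by the user's best-matching vector (one vector per interest)."""
    return max(dot(u, item_vector) for u in user_vectors)


if __name__ == "__main__":
    user = [[1.0, 0.0], [0.0, 1.0]]  # two orthogonal "interests"
    print(orthogonality_penalty(user))  # 0.0
    print(multi_vector_score(user, [0.0, 2.0]))  # 2.0
```

Adding the penalty to the training loss discourages the K vectors from collapsing onto the same direction, which would otherwise reduce the model back to a single-vector user.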
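For the density representation, one common way to compare two Gaussian embeddings is the 2-Wasserstein distance, which has a closed form when covariances are diagonal. The sketch assumes each embedding is a mean vector plus per-dimension standard deviations; the function names are hypothetical, and this is only one possible scoring choice (KL divergence is another).

```python
def w2_squared(mu1, sigma1, mu2, sigma2):
    """Squared 2-Wasserstein distance between two Gaussians with diagonal covariance.

    Each embedding is a mean vector `mu` and per-dimension standard deviations `sigma`.
    For diagonal covariances the closed form is ||mu1 - mu2||^2 + ||sigma1 - sigma2||^2.
    """
    mean_term = sum((a - b) ** 2 for a, b in zip(mu1, mu2))
    scale_term = sum((s - t) ** 2 for s, t in zip(sigma1, sigma2))
    return mean_term + scale_term


def score(user, item):
    """Higher is better: negative distance between the user's and the item's distributions."""
    return -w2_squared(user["mu"], user["sigma"], item["mu"], item["sigma"])


if __name__ == "__main__":
    print(w2_squared([0.0, 0.0], [1.0, 1.0], [3.0, 4.0], [1.0, 1.0]))  # 25.0
```

A larger `sigma` spreads an embedding's mass over a wider region, which is how uncertainty about, for example, a user with few interactions can be expressed.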

2. Scalability of GNN in Recommendation

• Applying existing GNNs as-is to recommendation is difficult
  ◦ Large memory usage, long training time
• Reduce the size of the graph
  ◦ Sampling methods
  ◦ GraphSAGE: uniform random neighbor sampling
  ◦ PinSage: random-walk-based neighbor sampling
  ◦ Some methods instead build small subgraphs
• Remove the nonlinearities between layers and collapse the weight matrices into one (decoupling propagation from transformation)
  ◦ Neighbor-averaged features need to be precomputed only once
  ◦ Drawback: limited choice of aggregators and updaters
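The two sampling strategies above can be sketched on an adjacency-list graph as follows. This is a simplified illustration, assuming every node has at least one neighbor: `uniform_sample` mimics GraphSAGE-style fixed-size neighbor sampling, and `random_walk_sample` mimics PinSage's idea of keeping the nodes most visited by short random walks. Both function names and their parameters are hypothetical, not either library's API.

```python
import random
from collections import Counter


def uniform_sample(adj, node, k, rng):
    """GraphSAGE-style: draw up to k neighbors uniformly at random, without replacement."""
    neighbors = adj[node]
    return rng.sample(neighbors, min(k, len(neighbors)))


def random_walk_sample(adj, node, k, num_walks, walk_length, rng):
    """PinSage-style: run short random walks from `node` and keep the k most-visited nodes.

    Assumes every node has at least one neighbor (rng.choice fails on an empty list).
    """
    visits = Counter()
    for _ in range(num_walks):
        current = node
        for _ in range(walk_length):
            current = rng.choice(adj[current])
            if current != node:  # do not count returns to the start node
                visits[current] += 1
    return [n for n, _ in visits.most_common(k)]


if __name__ == "__main__":
    rng = random.Random(0)
    adj = {0: [1, 2, 3], 1: [0, 2], 2: [0, 1], 3: [0]}
    print(uniform_sample(adj, 0, 2, rng))
    print(random_walk_sample(adj, 0, 2, num_walks=20, walk_length=3, rng=rng))
```

Either way, each node aggregates over at most k sampled neighbors instead of its full neighborhood, which bounds memory per batch regardless of node degree.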
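Once the nonlinearities are removed (as in SGC-style models), propagation has no learnable parameters, so it can be run once before training. The sketch below assumes a row-normalized mean with self-loops; SGC itself uses the symmetrically normalized adjacency, so this is a simplification. The function name `propagate` is hypothetical.

```python
def propagate(adj, features, num_hops):
    """Precompute num_hops rounds of neighbor averaging (self-loop included).

    There are no weights or nonlinearities, so this runs once, before any training.
    """
    h = {n: list(f) for n, f in features.items()}
    for _ in range(num_hops):
        new_h = {}
        for n in h:
            group = [n] + adj[n]  # the node itself plus its neighbors
            dim = len(h[n])
            new_h[n] = [sum(h[m][d] for m in group) / len(group) for d in range(dim)]
        h = new_h
    return h


if __name__ == "__main__":
    adj = {0: [1], 1: [0]}
    print(propagate(adj, {0: [0.0], 1: [2.0]}, num_hops=1))  # {0: [1.0], 1: [1.0]}
```

Any linear model can then be trained on the returned features without touching the graph again; the price is that the aggregator is fixed to this averaging, which is the limitation noted above.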

3. Dynamic Graphs in Recommendation

• Relationships change over time
• GraphSAIL: the only example covered, based on incremental learning
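A minimal sketch of the general shape of incremental learning, not GraphSAIL's method: when new edges arrive, update only the affected users on those edges, with a penalty that keeps each user near its embedding from the previous snapshot. The loss, the function name, and the hyperparameters (`lr`, `reg`) are hypothetical.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def incremental_update(user_emb, item_emb, old_user_emb, new_edges, lr=0.1, reg=0.5):
    """One SGD pass over only the newly arrived (user, item) edges.

    Per-edge loss (item embeddings frozen for brevity):
        (1 - u.v)^2 + reg * ||u - u_old||^2
    The second term keeps each user close to the previous snapshot, so old
    preferences are not overwritten by the latest interactions alone.
    `old_user_emb` must be a separate copy; `user_emb` is updated in place.
    """
    for u, i in new_edges:
        err = dot(user_emb[u], item_emb[i]) - 1.0
        user_emb[u] = [
            x - lr * (2.0 * err * v + 2.0 * reg * (x - x_old))
            for x, v, x_old in zip(user_emb[u], item_emb[i], old_user_emb[u])
        ]
    return user_emb
```

The cost of each update scales with the number of new edges rather than the size of the full graph, which is the point of training incrementally instead of retraining from scratch.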

4. Reception Field of GNN in Recommendation

•

Graph diffusion-based works: aggregation과 update를 분리

◦

더 큰 reception field에 대비

•

너무 큰 reception field는 oversmoothing problem 야기

•

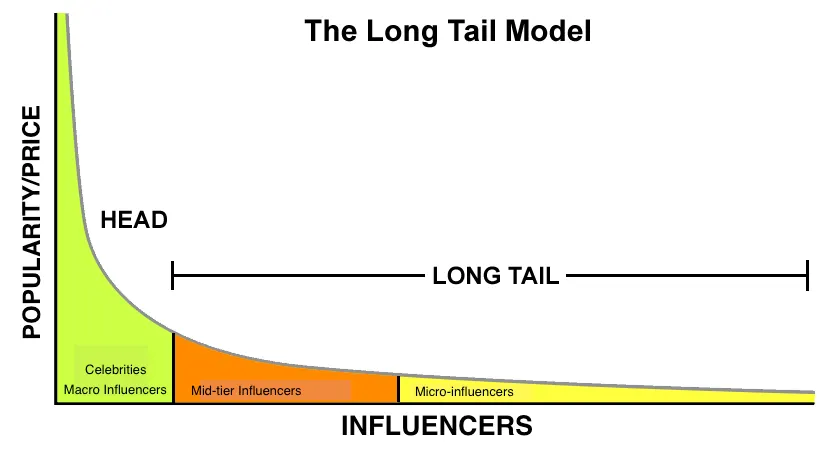

추천에서 node degree distribution은 long tail

•

Adaptive decision-based propagation step
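Both effects above can be seen on a toy graph. `mean_step` repeats parameter-free mean aggregation, and the spread of node features shrinks toward zero as hops increase (oversmoothing). `hops_for` is a purely hypothetical degree-based rule in the spirit of adaptive propagation: it gives long-tail nodes more hops and high-degree nodes fewer.

```python
import math


def mean_step(adj, h):
    """One round of mean aggregation over each node and its neighbors (self-loop included)."""
    return {
        n: [sum(h[m][d] for m in [n] + adj[n]) / (1 + len(adj[n])) for d in range(len(h[n]))]
        for n in h
    }


def spread(h):
    """Range of the first feature across nodes; it shrinks toward 0 as nodes oversmooth."""
    values = [vec[0] for vec in h.values()]
    return max(values) - min(values)


def hops_for(degree, max_hops=4):
    """Hypothetical adaptive rule: high-degree nodes get fewer hops, long-tail nodes more."""
    return max(1, max_hops - int(math.log2(1 + degree)))


if __name__ == "__main__":
    adj = {0: [1], 1: [0, 2], 2: [1]}  # path graph 0 - 1 - 2
    h = {0: [0.0], 1: [3.0], 2: [6.0]}
    for _ in range(10):
        h = mean_step(adj, h)
    print(spread(h))  # about 0.006, down from 6.0: the three nodes are nearly identical
    print([hops_for(d) for d in (0, 1, 3, 15)])  # [4, 3, 2, 1]
```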

5. Self-supervised Learning

• Helps with data sparsity by providing additional supervision signals
• COTREC
  ◦ Contrastive learning task
  ◦ Maximizes agreement between views
• DHCN
  ◦ Maximizes mutual information
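COTREC and DHCN each build their own views and objectives; the sketch below shows only the generic InfoNCE contrastive loss, a common way to maximize agreement between two views of the same nodes (InfoNCE also lower-bounds the mutual information between the views). The cosine similarity, the temperature value, and the function names are assumptions.

```python
import math


def cosine(a, b):
    """Cosine similarity with a small guard against zero vectors."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))
    return num / max(den, 1e-12)


def info_nce(view1, view2, temperature=0.2):
    """Average InfoNCE loss over a batch of nodes.

    Row i of view1 and row i of view2 are two views of the same node (the positive pair);
    every other row of view2 acts as a negative for row i of view1.
    """
    n = len(view1)
    total = 0.0
    for i in range(n):
        logits = [cosine(view1[i], view2[j]) / temperature for j in range(n)]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # equals -log softmax(logits)[i]
    return total / n
```

The loss is low when each node's two views are closer to each other than to any other node's view, so no labels are needed, which is why it helps under sparse interaction data.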

6. Robustness in GNN-based Recommendation

• GNNs are vulnerable to noise
• Graph adversarial learning
• GraphRfi
  ◦ Jointly learns rating prediction and fraudster detection
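One hypothetical way to couple the two tasks, not GraphRfi's exact formulation: weight each interaction's rating loss by the estimated probability that the user is genuine, and add a cross-entropy loss for fraud detection on labeled users. `joint_loss` and `alpha` are illustrative names.

```python
import math


def joint_loss(ratings, predictions, fraud_probs, fraud_labels, alpha=1.0):
    """Hypothetical joint objective over a batch of interactions.

    ratings[k], predictions[k]: observed and predicted rating of interaction k.
    fraud_probs[k]: estimated probability that the user behind interaction k is a fraudster.
    fraud_labels[k]: 1 if that user is a known fraudster, else 0.
    """
    eps = 1e-12  # keeps log() finite when a probability is exactly 0 or 1
    # Rating loss: squared error, down-weighted for users who look like fraudsters.
    rating_loss = sum(
        (1.0 - p) * (r - r_hat) ** 2 for r, r_hat, p in zip(ratings, predictions, fraud_probs)
    )
    # Detection loss: binary cross-entropy between fraud probabilities and labels.
    detection_loss = -sum(
        y * math.log(p + eps) + (1 - y) * math.log(1.0 - p + eps)
        for p, y in zip(fraud_probs, fraud_labels)
    )
    return rating_loss + alpha * detection_loss
```

A suspected fraudster's ratings are mostly ignored by the recommender, while a trustworthy user contributes fully. In practice the detection term (or stopping gradients through the weight) is needed so the model cannot shrink the rating loss by simply marking every user as a fraudster.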

7. Privacy Preserving

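• Needs to be built on federated learning
• It is hard to use high-order connectivity information under federated learning, since each client holds only its own interactions
• Adding pseudo interacted items can improve privacy
  ◦ Recommendation performance drops
  ◦ PPGRec: partially addresses the problem with differential privacy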
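A client-side sketch of the pseudo-item idea combined with noise, assuming a federated setup where each client uploads per-item gradients. The function and its parameters are hypothetical and not taken from PPGRec or any specific system; a real differential-privacy guarantee additionally requires calibrating `noise_std` to the clipping norm and a privacy budget, which this sketch does not do.

```python
import math
import random


def clip(grad, max_norm):
    """Scale a gradient down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def privatize_upload(real_items, item_grads, all_items, num_pseudo, max_norm, noise_std, rng):
    """Hypothetical client-side step before uploading item-embedding gradients.

    1. Sample pseudo interacted items the user never touched, so the server cannot
       tell real interactions from fake ones by looking at which items are uploaded.
    2. Clip every gradient and add Gaussian noise (pseudo items start from zero).
    """
    candidates = [i for i in all_items if i not in real_items]
    pseudo = rng.sample(candidates, min(num_pseudo, len(candidates)))
    dim = len(next(iter(item_grads.values())))
    upload = {}
    for item in list(real_items) + pseudo:
        grad = clip(item_grads.get(item, [0.0] * dim), max_norm)
        upload[item] = [g + rng.gauss(0.0, noise_std) for g in grad]
    return upload
```

The noise that hides which uploaded items are real also perturbs the real gradients, which is one way to see why performance drops.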

8. Fairness in GNN-based Recommender System

• The GNN recommends a small set of items far too often
• Recommendation performance differs greatly across user demographic groups
• Existing work includes NISER and FairGNN
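Before choosing a mitigation such as NISER or FairGNN, both problems can be quantified. The sketch measures (a) how concentrated item exposure is across users' recommendation lists, via the Gini coefficient, and (b) the gap in a per-user metric between demographic groups. These metric choices and function names are assumptions, not taken from those papers.

```python
from collections import Counter


def exposure_gini(rec_lists, catalog):
    """Gini coefficient of how often each catalog item appears across users' top-k lists.

    0 means exposure is spread evenly; values near 1 mean a few items dominate.
    Items never recommended count as zero exposure.
    """
    counts = Counter(item for recs in rec_lists for item in recs)
    values = sorted(counts.get(item, 0) for item in catalog)
    n, total = len(values), sum(values)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum((rank + 1) * v for rank, v in enumerate(values))
    return 2.0 * weighted / (n * total) - (n + 1) / n


def group_gap(metric_by_user, group_of_user):
    """Largest difference in the mean per-user metric (e.g., recall@k) between user groups."""
    sums, sizes = {}, {}
    for user, value in metric_by_user.items():
        g = group_of_user[user]
        sums[g] = sums.get(g, 0.0) + value
        sizes[g] = sizes.get(g, 0) + 1
    means = [sums[g] / sizes[g] for g in sums]
    return max(means) - min(means)


if __name__ == "__main__":
    print(exposure_gini([[4], [4], [4], [4]], [1, 2, 3, 4]))  # 0.75: one item gets all exposure
```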

9. Explainability

• Instance-level methods
  ◦ Example-specific explanations
  ◦ Identify important features
• Model-level methods
  ◦ Generic understanding of how deep graph models work
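An instance-level explanation can be illustrated with leave-one-edge-out occlusion on a toy one-layer model in which a user is the mean of their interacted items' embeddings. This is a simple perturbation method, not any specific published explainer; the model and the function names are hypothetical.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def user_repr(neighbor_items, item_emb):
    """Toy one-layer GNN: a user is the mean of the embeddings of the items they interacted with."""
    dim = len(next(iter(item_emb.values())))
    if not neighbor_items:
        return [0.0] * dim
    return [sum(item_emb[i][d] for i in neighbor_items) / len(neighbor_items) for d in range(dim)]


def score(neighbor_items, target, item_emb):
    """Predicted preference of the user for the target item."""
    return dot(user_repr(neighbor_items, item_emb), item_emb[target])


def explain(neighbor_items, target, item_emb):
    """Instance-level explanation by occlusion.

    For each interaction edge, measure how much the score drops when that edge is removed;
    a larger drop means the edge mattered more for this particular recommendation.
    """
    base = score(neighbor_items, target, item_emb)
    importance = {}
    for i in neighbor_items:
        rest = [j for j in neighbor_items if j != i]
        importance[i] = base - score(rest, target, item_emb)
    return sorted(importance.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    emb = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 0.0]}
    print(explain(["a", "b"], "c", emb))  # [('a', 0.5), ('b', -0.5)]
```

The explanation is specific to one (user, item) pair, which is what separates instance-level methods from model-level ones.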